In this article, we will see how to find unused load balancers based on CloudWatch usage metrics such as RequestCount and ActiveFlowCount.

We will fetch the usage metrics from the CloudWatch API using Python and Boto3, and write them as a CSV report covering all three types of load balancers in AWS:

- Application Load Balancer (ELB v2, Layer 7)

- Network Load Balancer (ELB v2, Layer 4)

- Classic Load Balancer (ELB v1, Layer 4 and Layer 7)
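Each type reports its traffic under a different CloudWatch namespace, metric, and dimension. As a minimal sketch, that mapping can be kept in a plain dictionary mirroring the values the script's `configmap` uses; `METRIC_CONFIG` and `metric_for` are hypothetical names used only for illustration.

```python
# Hypothetical lookup table: load balancer type -> CloudWatch settings used in this article
METRIC_CONFIG = {
    "classic": {"namespace": "AWS/ELB", "metric": "RequestCount", "dimension": "LoadBalancerName"},
    "application": {"namespace": "AWS/ApplicationELB", "metric": "RequestCount", "dimension": "LoadBalancer"},
    "network": {"namespace": "AWS/NetworkELB", "metric": "ActiveFlowCount", "dimension": "LoadBalancer"},
}


def metric_for(lb_type: str) -> tuple:
    """Return (namespace, metric name, dimension name) for a load balancer type."""
    try:
        cfg = METRIC_CONFIG[lb_type]
    except KeyError:
        # e.g. Gateway Load Balancers are not covered by this article
        raise ValueError(f"unsupported load balancer type: {lb_type!r}") from None
    return cfg["namespace"], cfg["metric"], cfg["dimension"]


print(metric_for("network"))
```

A dictionary keyed by type gives a direct lookup and a clear error for unsupported types, instead of filtering a list on every call.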

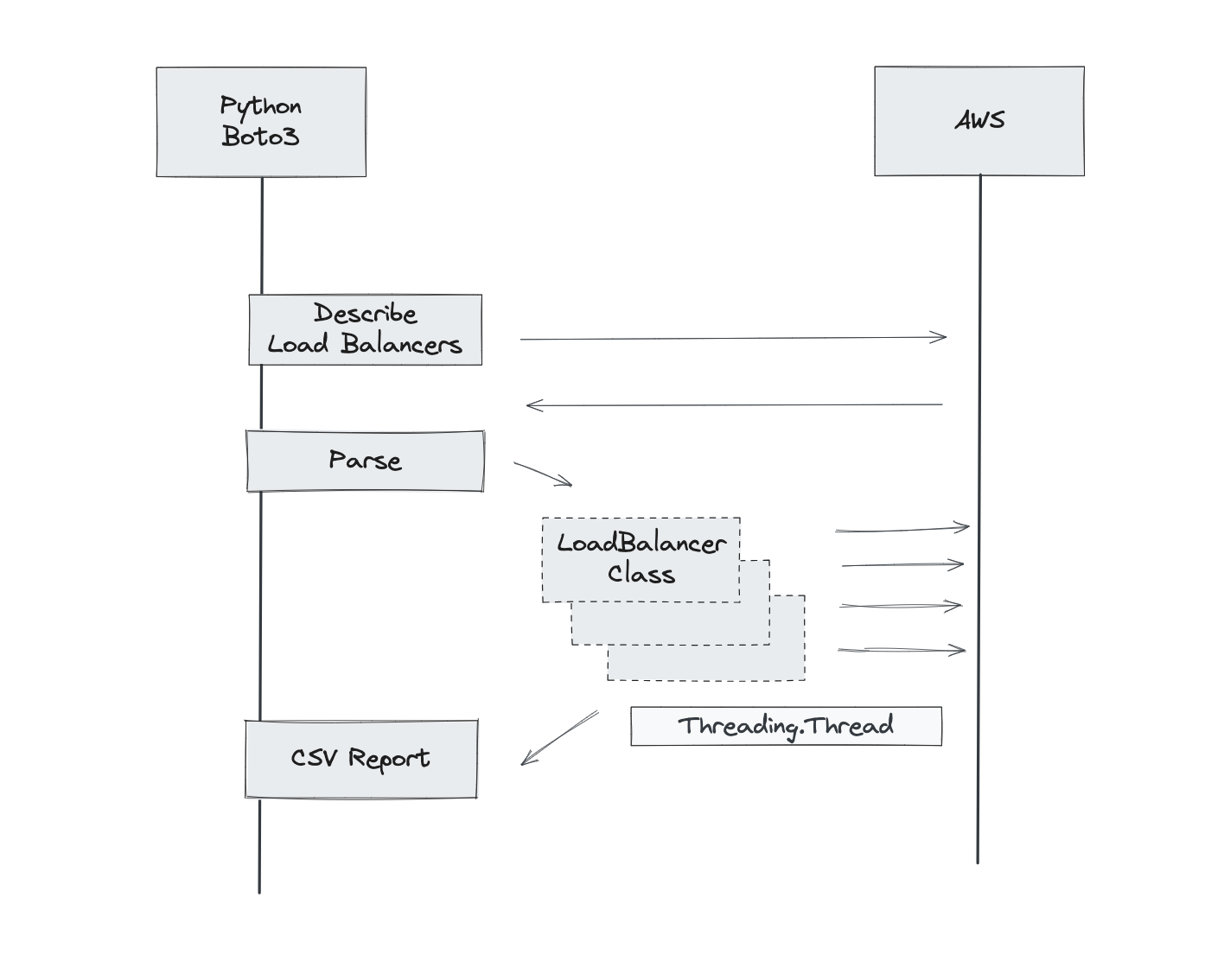

Let us quickly walk through the flow diagram (the Code layer of the C4 model) at the class level.

As illustrated above, we use Python and Boto3 to perform the following steps:

- Get the list of all load balancers (CLB, ALB and NLB) using the DescribeLoadBalancers method

- Parse through the fetched list of load balancers and instantiate the LoadBalancer class, which extends threading.Thread, so that the load balancers are processed in parallel

- The LoadBalancer class contains the methods that call the CloudWatch API to get the metrics and write the results as a report into a CSV file
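The one-thread-per-load-balancer pattern in these steps can be sketched without touching AWS at all. In this minimal sketch, `FAKE_USAGE`, `UsageWorker` and `collect_usage` are hypothetical stand-ins: the dictionary lookup in `run` takes the place of the real CloudWatch call, and a lock guards the shared results.

```python
import threading

# Hypothetical stand-in for CloudWatch: the real script calls get_metric_statistics instead
FAKE_USAGE = {"alb-1": 1200.0, "nlb-1": 0.0, "clb-1": 35.0}


class UsageWorker(threading.Thread):
    """One thread per load balancer, similar in spirit to the article's LoadBalancer class."""

    def __init__(self, lb_name, results, lock):
        super().__init__()
        self.lb_name = lb_name  # kept separate from Thread.name on purpose
        self.results = results
        self.lock = lock

    def run(self):
        value = FAKE_USAGE.get(self.lb_name, 0.0)  # the network call would happen here
        with self.lock:  # only one thread updates the shared dict at a time
            self.results[self.lb_name] = value


def collect_usage(lb_names):
    results, lock = {}, threading.Lock()
    workers = [UsageWorker(name, results, lock) for name in lb_names]
    for worker in workers:
        worker.start()
    for worker in workers:  # join only the threads we started
        worker.join()
    return results


print(collect_usage(["alb-1", "nlb-1", "clb-1"]))
```

Keeping a list of the started threads and joining exactly those avoids waiting on unrelated threads that a library may have started in the same process.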

Prerequisites

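- Python 3 with the boto3 and loguru packages installed (pip install boto3 loguru)

- AWS CLI installed and configured

- Credentials that are allowed to call elasticloadbalancing:DescribeLoadBalancers and cloudwatch:GetMetricStatistics

- An AWS profile (at least default), since the script picks up credentials through boto3's default credential chain, which includes environment variables and the default profile

- If you do not have profiles, you can export the access key and secret before starting the program:

```bash
export AWS_ACCESS_KEY_ID=***********************
export AWS_SECRET_ACCESS_KEY=***********************
```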
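If you are not sure whether the exported keys are visible to the script, a quick pre-flight check can help. This is a hypothetical helper (`env_credentials_present`), not part of the original script, and it only inspects the two environment variables above; boto3 also looks at shared credential files, profiles and instance roles, so a False result does not necessarily mean boto3 will fail.

```python
import os


def env_credentials_present(env=None) -> bool:
    """True if both static-key environment variables are set and non-empty."""
    env = os.environ if env is None else env
    return all(env.get(key) for key in ("AWS_ACCESS_KEY_ID", "AWS_SECRET_ACCESS_KEY"))


if not env_credentials_present():
    print("No static keys in the environment; boto3 will fall back to profiles or roles")
```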

Arguments and Defaults

As startup arguments, the script accepts the number of days and the report file path, in that order:

- no_of_days: how many days of data to fetch from CloudWatch

- report_name: full or relative path of the CSV file to write the report to
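The script reads these two values positionally from sys.argv. If you prefer input validation and a generated --help, a sketch using the standard argparse module could look like this; `positive_int` and `parse_args` are hypothetical helpers, not part of the original script.

```python
import argparse


def positive_int(text: str) -> int:
    """argparse type: accept only whole numbers >= 1."""
    value = int(text)  # a ValueError here becomes a clean argparse error message
    if value < 1:
        raise argparse.ArgumentTypeError("no_of_days must be at least 1")
    return value


def parse_args(argv=None):
    parser = argparse.ArgumentParser(description="Report CloudWatch usage per load balancer")
    parser.add_argument("no_of_days", type=positive_int, help="days of CloudWatch data to fetch")
    parser.add_argument("report_name", help="full or relative path of the CSV report")
    return parser.parse_args(argv)


args = parse_args(["5", "lb_report.csv"])
print(args.no_of_days, args.report_name)  # 5 lb_report.csv
```

With this approach no_of_days is already an int by the time it reaches the worker threads, so no later int() conversion is needed.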

The script uses the boto3 method get_metric_statistics, which requires a set of parameters in the right format. The important parameter to highlight here is Period.

Period is the length, in seconds, of each aggregation window, and it controls the granularity of the returned data points.
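To see how Period sets the granularity, here is a simplified model that splits a time range into consecutive windows. `period_buckets` is a hypothetical helper; CloudWatch applies its own rounding to StartTime, so treat this as an approximation of how many data points to expect rather than the service's exact behaviour.

```python
from datetime import datetime, timedelta, timezone


def period_buckets(start: datetime, end: datetime, period_seconds: int):
    """Split [start, end) into consecutive windows of period_seconds each."""
    step = timedelta(seconds=period_seconds)
    buckets = []
    current = start
    while current < end:
        buckets.append((current, min(current + step, end)))
        current += step
    return buckets


end = datetime(2024, 1, 6, tzinfo=timezone.utc)
start = end - timedelta(days=5)
print(len(period_buckets(start, end, 86400)))  # 5 daily windows
print(len(period_buckets(start, end, 3600)))   # 120 hourly windows
```

A smaller period means more data points per call; CloudWatch caps a single get_metric_statistics call at 1,440 data points, which is one reason a daily period suits multi-day lookbacks.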

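Put simply, with a period of 86400 seconds (one day), CloudWatch returns one aggregated value per day for each metric, over the requested number of days.

For example, if we start the script with 5 as the number of days, we get up to 5 data points, one per day. Days without any recorded activity may return no data point at all.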
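Because there can be several daily data points, and they are not necessarily returned in time order, the total usage should add up every data point rather than read only the first one. A minimal sketch, where `total_usage` is a hypothetical helper and `sample` is made-up data shaped like `response["Datapoints"]`:

```python
from datetime import datetime, timezone


def total_usage(datapoints, statistic="Sum"):
    """Add up one statistic across all returned data points; no data points means 0."""
    return sum(dp[statistic] for dp in datapoints)


# Made-up example values in the shape of get_metric_statistics' Datapoints list
sample = [
    {"Timestamp": datetime(2024, 1, 3, tzinfo=timezone.utc), "Sum": 40.0, "Unit": "Count"},
    {"Timestamp": datetime(2024, 1, 1, tzinfo=timezone.utc), "Sum": 2.0, "Unit": "Count"},
]
print(total_usage(sample))  # 42.0
print(total_usage([]))      # 0
```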

Feel free to modify this script to suit your needs. For more information on this method, refer to the article here

Source Code

Here is the entire source code as a single file.

```python
import csv
import sys
import threading
from datetime import datetime, timedelta, timezone

import boto3
import loguru

report_name = ""
no_of_days = 1

log = loguru.logger

# Serialises writes to the shared CSV file, since every thread appends to it
report_lock = threading.Lock()


class LoadBalancer(threading.Thread):

    def __init__(self, name, lb_type, arn="", no_of_days=1):
        threading.Thread.__init__(self)
        self.name = name
        self.type = lb_type
        self.arn = arn
        self.cloudwatch_client = boto3.client('cloudwatch')
        self.no_of_days = no_of_days

    def run(self):
        # CloudWatch works in UTC, so use a timezone-aware "now"
        end_time = datetime.now(timezone.utc)
        start_time = end_time - timedelta(days=int(self.no_of_days))
        self.get_usage_for_period(start_time, end_time)

    def get_usage_for_period(self, start_time, end_time):
        configmap = [
            {
                "type": "classic",
                "metrics": "RequestCount",
                "namespaces": "AWS/ELB",
                "dimensions": "LoadBalancerName",
                "statistics": ["Sum"]
            },
            {
                "type": "network",
                "metrics": "ActiveFlowCount",
                "namespaces": "AWS/NetworkELB",
                "dimensions": "LoadBalancer",
                "statistics": ["Sum"]
            },
            {
                "type": "application",
                "metrics": "RequestCount",
                "namespaces": "AWS/ApplicationELB",
                "dimensions": "LoadBalancer",
                "statistics": ["Sum"]
            }]

        Namespace = find_config_in_map(configmap, self.type, "namespaces")
        MetricName = find_config_in_map(configmap, self.type, "metrics")
        # Classic LBs are identified by name, ALBs and NLBs by the ARN suffix
        dimension_value = self.name if self.type == 'classic' else self.arn
        Dimensions = [{'Name': find_config_in_map(configmap, self.type, "dimensions"),
                       'Value': dimension_value}]
        Stats = find_config_in_map(configmap, self.type, "statistics")

        log.info(f"Calling CloudWatch with {Namespace}, {MetricName}, {Dimensions}, {Stats}, {start_time}, {end_time}")

        response = self.cloudwatch_client.get_metric_statistics(
            Namespace=Namespace,
            MetricName=MetricName,
            Dimensions=Dimensions,
            StartTime=start_time,
            EndTime=end_time,
            Period=86400,  # represents the period in seconds - 1 day
            Statistics=Stats
        )
        log.info(response)

        # Add up the daily Sum values; an empty Datapoints list means zero usage
        cloudwatch_result = sum(dp['Sum'] for dp in response['Datapoints'])

        report_data = [[self.type, self.name, MetricName, cloudwatch_result]]
        write_report('a', report_data)


def find_config_in_map(lst, searchval, configkey, key="type"):
    """
    This function searches for a specific value in a list of dictionaries and returns the corresponding
    configuration value based on a specified key.
    """
    result = [element for element in lst if element[key] == searchval][0][configkey]
    return result


def write_report(mode, data, addheader=False):
    fieldnames = ['LoadBalancerType', 'LoadBalancerName', 'Metric', 'Value']
    # Only one thread at a time may open and write to the report file
    with report_lock, open(report_name, mode, newline='') as csvfile:
        writer = csv.DictWriter(csvfile, fieldnames=fieldnames)
        if addheader and mode == 'w':
            writer.writeheader()
        for row in data:
            writer.writerow({
                'LoadBalancerType': row[0],
                'LoadBalancerName': row[1],
                'Metric': row[2],
                'Value': row[3]
            })


def list_load_balancers_and_stats():
    # Create boto3 clients for ELB (Classic) and ELBv2 (ALB and NLB)
    elb_client = boto3.client('elb')
    elbv2_client = boto3.client('elbv2')
    threads = []

    # Start a fresh report containing only the header row
    write_report('w', [], addheader=True)

    # Get Classic Load Balancers (paginated, in case there are many)
    for page in elb_client.get_paginator('describe_load_balancers').paginate():
        for clb in page['LoadBalancerDescriptions']:
            log.info(f"Creating Classic LBObj {clb['LoadBalancerName']}")
            t = LoadBalancer(clb['LoadBalancerName'], 'classic', no_of_days=no_of_days)
            t.start()
            threads.append(t)

    # Get Network Load Balancers and Application Load Balancers
    for page in elbv2_client.get_paginator('describe_load_balancers').paginate():
        for elb in page['LoadBalancers']:
            lb_name, lb_type, lb_arn = elb['LoadBalancerName'], elb['Type'], elb['LoadBalancerArn']
            if lb_type not in ('application', 'network'):
                # e.g. Gateway Load Balancers, which have no entry in configmap
                log.info(f"Skipping {lb_name} of type {lb_type}")
                continue
            # The LoadBalancer dimension is the ARN part after "loadbalancer/"
            lb_arn = lb_arn.split("/", 1)[1]
            log.info(f"Creating LBObj with {lb_name}, {lb_type}, {lb_arn}")
            lbobj = LoadBalancer(lb_name, lb_type, lb_arn, no_of_days=no_of_days)
            lbobj.start()
            threads.append(lbobj)

    log.info(f"Waiting for {len(threads)} threads to finish")
    for thread in threads:
        thread.join()


def main():
    args = sys.argv[1:]
    if len(args) != 2:
        print('Usage: python UnusedLBs.py <no_of_days> <report_name>')
        sys.exit(1)

    global report_name, no_of_days
    no_of_days = int(args[0])
    report_name = args[1]
    list_load_balancers_and_stats()


if __name__ == "__main__":
    main()
```
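For ALBs and NLBs, CloudWatch expects the LoadBalancer dimension to be the part of the ARN after "loadbalancer/" (for example app/name/id), which is what the script's split("/", 1) extracts. The same conversion written as a standalone helper, with a slightly stricter check; `arn_to_dimension` is a hypothetical name and the ARN below is a made-up example in the documented format.

```python
def arn_to_dimension(lb_arn: str) -> str:
    """Return the part of an ALB/NLB ARN after ':loadbalancer/'."""
    _, sep, suffix = lb_arn.partition(":loadbalancer/")
    if not sep:
        raise ValueError(f"not a load balancer ARN: {lb_arn}")
    return suffix


example = "arn:aws:elasticloadbalancing:us-east-1:123456789012:loadbalancer/app/my-alb/50dc6c495c0c9188"
print(arn_to_dimension(example))  # app/my-alb/50dc6c495c0c9188
```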

How to execute

Just copy the code, save it as UnusedLBs.py, and run it:

```bash
python UnusedLBs.py <no_of_days> <report_name>
```
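Once the report is written, the load balancers with a zero value are the candidates for cleanup. A minimal sketch that reads the CSV produced by the script (same column names as its fieldnames) and returns those rows; `unused_load_balancers` is a hypothetical helper, and a zero only means no traffic was recorded for the chosen metric in that window, so review each candidate before deleting it.

```python
import csv


def unused_load_balancers(report_path):
    """Return (type, name) for every report row whose Value is zero."""
    unused = []
    with open(report_path, newline="") as f:
        for row in csv.DictReader(f):
            if float(row["Value"]) == 0:
                unused.append((row["LoadBalancerType"], row["LoadBalancerName"]))
    return unused
```

For example, unused_load_balancers("lb_report.csv") returns a list such as [("classic", "my-old-clb")] when that load balancer recorded no requests.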

Hope it helps.

Cheers

Sarav AK
